Deploying Kubeflow on AWS, GCP, Azure or on-prem: It is a lot more than just downloading and installing open source Kubeflow

Over the past two-plus years Kubeflow has come a long way in bringing together many open source innovations to implement a cost-effective AI/ML platform. These include KF Pipelines, KF Serving, AutoML and Istio from the Kubernetes ecosystem, in addition to framework operators for TensorFlow, PyTorch, XGBoost and scikit-learn. However, “implementing” Kubeflow in your preferred cloud or on-prem environment still requires significant work from a few people over many months. The work comes in terms of:

1. Enterprise integration with user authentication and onboarding

2. Enterprise integration with version control systems

3. Storage connectivity, especially on Azure, which has no S3 interface, or on-prem with NFS or Ceph

4. GPU allocation and pooling/sharing for users or groups and many other such challenges

5. Once all the items above are done, the final challenge is the enterprise resiliency and uptime of the system.
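To make item 4 concrete, here is a minimal sketch of one way GPU budgets per group could be expressed, assuming each group maps to its own Kubernetes namespace and that GPUs are exposed under the `nvidia.com/gpu` resource name (as the NVIDIA device plugin does). The team names, the quota name `gpu-quota` and the helper functions are hypothetical, and this is not a description of how any particular platform implements GPU pooling. It only generates standard `ResourceQuota` manifests that an administrator could review and apply.

```python
import json

# Hypothetical GPU budget per team namespace (illustrative values only).
TEAM_GPU_BUDGET = {"team-vision": 4, "team-nlp": 2}


def gpu_quota_manifest(namespace: str, max_gpus: int) -> dict:
    """Build a Kubernetes ResourceQuota capping GPU requests in one namespace."""
    if max_gpus < 0:
        raise ValueError("max_gpus must be non-negative")
    return {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        # "gpu-quota" is an arbitrary name chosen for this sketch.
        "metadata": {"name": "gpu-quota", "namespace": namespace},
        # Extended resources are limited through the "requests." prefix.
        "spec": {"hard": {"requests.nvidia.com/gpu": str(max_gpus)}},
    }


def build_all(budget: dict) -> list:
    """Return one manifest per namespace, sorted by namespace name."""
    return [gpu_quota_manifest(ns, n) for ns, n in sorted(budget.items())]


if __name__ == "__main__":
    # Print the manifests as JSON, which kubectl accepts as well as YAML.
    for manifest in build_all(TEAM_GPU_BUDGET):
        print(json.dumps(manifest, indent=2))
```

A hard quota like this only caps what a namespace can request. Sharing idle GPUs across groups, or fractional GPU use, needs additional scheduling logic, which is part of why this item takes real engineering effort.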

Hence deploying Kubeflow successfully, and operationalizing your data and model prep, tuning, deployment and monitoring while managing security, compliance and governance, is still rather challenging. Doing it yourself can mean many months of work for several people, and that effort repeats for every new organization and almost every new installation. The lost productivity and time are significant: the cost savings of using Kubeflow are offset by added expense that can run to hundreds of thousands of dollars and many months per installation. For this reason many Kubeflow installation projects at large Fortune 100 companies have stalled.

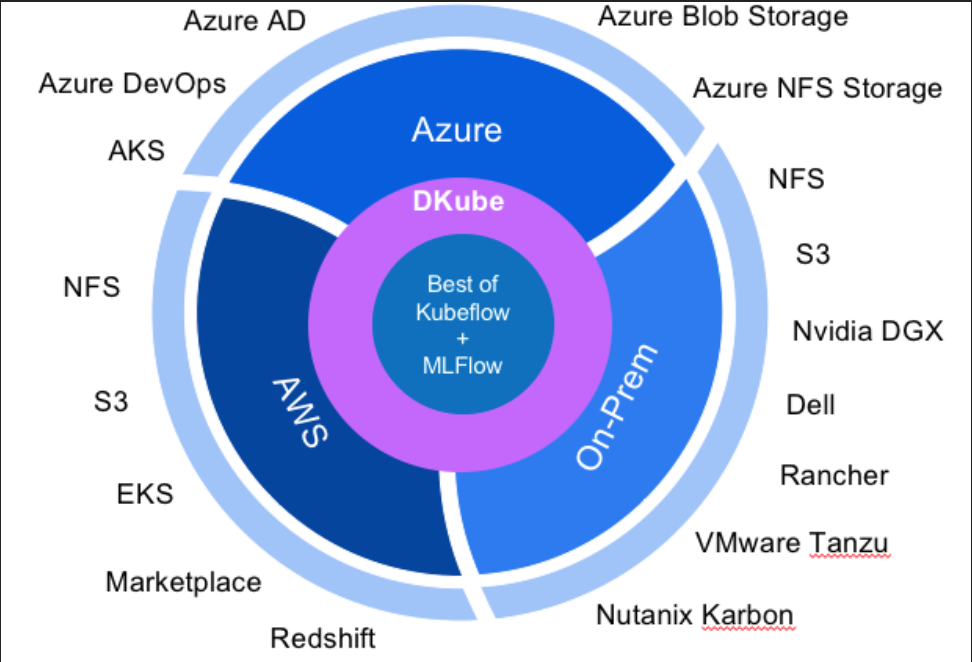

But there is some good news on the Kubeflow front. New AI/ML platforms that are built natively on top of Kubeflow from the ground up can address this challenge for you. DKube from One Convergence™ Inc., for example, has built a standard Kubeflow package with a better and more modern UI. It integrates with AWS EKS, Azure AKS, or any cloud or on-prem environment running the Rancher Kubernetes EE, Nutanix Karbon or VMware Tanzu distribution of K8S. As shown in the graphic above, it integrates with Azure Blob or Azure NFS, AWS S3, and on-prem S3/NFS/Ceph storage. It integrates with Active Directory or LDAP authentication in any of the cloud or on-prem installations. It integrates with Git, GitOps, Bitbucket and Azure DevOps version control systems. It integrates with healthcare data sources like Arvados or Flywheel. In other words, you get a shrink-wrapped package that, with a few simple commands at install and config time, can get you going in AWS, Azure, GCP, or on-prem on a Kubernetes distribution of your choice. Going from the start of the install to onboarded users can take as little as a few hours, or a day, depending on the complexity of the cluster.

Think of it as the choice between building a custom home and purchasing a home from a volume home builder. For a custom home, you get a blueprint from somewhere, buy all the supplies on the open market yourself or through contractors, and hire contractors to build the house. However, custom homes are not meant for most people. They can take a LOT of your time and cost you a LOT more. It is far more efficient to use a volume home builder who produces homes from a small set of their own blueprints, using mostly the same supplies in various grades for different price points and layouts, with trained contractors on the regular payroll. The speed and consistency of volume home builders often exceed those of a custom home at the same price point, because they have figured out the formula to survey the land, get permits, draw up blueprints, get supplies, and hire and retain experienced, skilled contractors on a repeat basis. Call it cookie cutter, but the price goes down and the speed goes up for the same square footage. Most people want that option. Don't we all want a much higher volume of Kubeflow deployments?

Kubeflow needs those volume home builders. DKube is one of the volume builders of Kubeflow in any cloud or on-prem. Please contact us for more info at info@dkube.io.