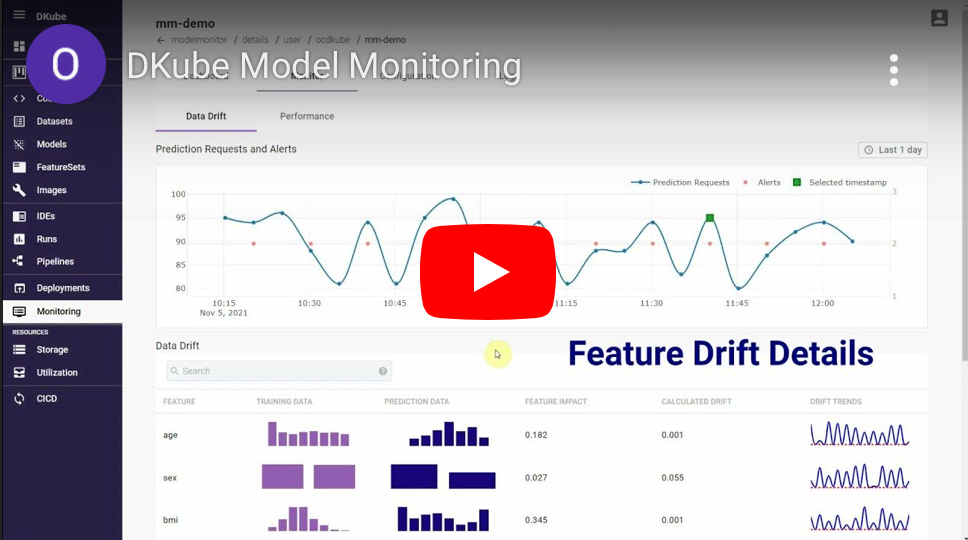

Want to learn how to monitor your models in production? The DKube platform integrates model monitoring into the overall system with DKube Monitor. It includes everything engineers and executives need to see how well your models are meeting their business goals, and it supports a smooth workflow for improving them when necessary.