The Duchess of Windsor famously said that you could not be too rich or too thin. And whether or not that is correct, a similar observation is definitely true when trying to match deep learning applications and compute resources: you cannot have enough horsepower.

Intractable problems in fields as diverse as finance, security, medical research, resource exploration, self-driving vehicles, and defense are being solved today by “training” a complex neural network to behave as desired rather than programming a more traditional computer to take explicit steps. And even though the discipline is still relatively young, the results have been astonishing.

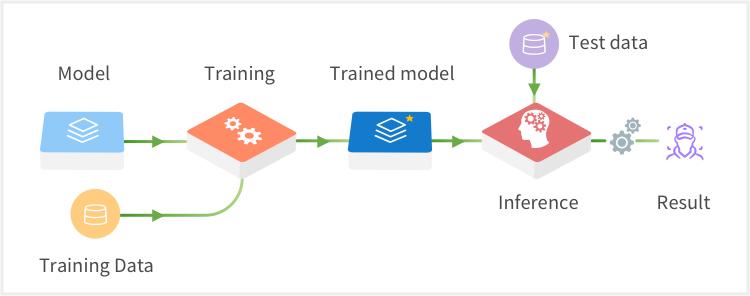

The training process required to take a Deep Learning model and turn it into a computerized savant is extremely resource-intensive. The basic building blocks for the necessary operations are GPUs, and though they are already powerful – and getting more so all the time – the kinds of applications identified will take whatever you can throw at them and ask for more.

In order to achieve the necessary horsepower, the GPUs need to be used in parallel, and therein lies the rub. The easiest way to bring more GPUs to bear is to simply add them to the system, but this scale-up approach has some real-world limitations. You can only get a certain number of these powerful beasts into a single system within reasonable physical, electrical, and power dissipation constraints. If you want more than that – and you do – you need to scale out.

This means that you need to provide multiple nodes in a cluster, and for this to be useful the GPUs need to be shared among the nodes. Problem solved, right? It can be – but this approach brings with it a new set of challenges.

The basic challenge is that just combining a whole bunch of compute nodes into a large cluster – and making them work together seamlessly – is not simple. In fact, if it is not done properly, the performance could become worse as you increase the number of GPUs, and the cost could become unattractive.
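To make the scaling concern concrete, here is a minimal sketch of a toy model, not a measurement of any real system. It assumes each training step costs a fixed compute time plus a synchronization cost that grows linearly with the number of GPUs. The function names and the numbers (`compute_s`, `sync_s_per_gpu`) are hypothetical and chosen only for illustration.

```python
def step_time(num_gpus, compute_s, sync_s_per_gpu):
    """Seconds per training step under the toy model.

    Assumption: a single GPU needs no synchronization; with more GPUs,
    every step pays a sync cost proportional to the cluster size.
    """
    if num_gpus == 1:
        return compute_s
    return compute_s + sync_s_per_gpu * num_gpus


def scaling_efficiency(num_gpus, compute_s, sync_s_per_gpu):
    """Fraction of ideal (linear) speedup actually achieved.

    Per-GPU throughput is proportional to 1 / step_time, so efficiency
    is the single-GPU step time divided by the N-GPU step time.
    """
    return step_time(1, compute_s, sync_s_per_gpu) / step_time(
        num_gpus, compute_s, sync_s_per_gpu
    )


# Hypothetical numbers: 100 ms of compute, 10 ms of sync per GPU in the job.
for n in (1, 8, 32):
    eff = scaling_efficiency(n, compute_s=0.1, sync_s_per_gpu=0.01)
    print(f"{n:>2} GPUs: {eff:.0%} of ideal speedup")
```

Under these assumptions each added GPU buys less than the one before it, so the cost per unit of useful work rises with cluster size. A faster interconnect lowers the per-GPU sync cost and flattens that curve.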

Mellanox has partnered with One Convergence to solve the problems associated with efficiently scaling on-prem or bare metal cloud Deep Learning systems.

Mellanox supplies end-to-end Ethernet solutions that exceed the most demanding criteria and leave the competition in the dust. For example, the chart below shows the performance advantage of TensorFlow over a Mellanox 100GbE network versus a 10GbE network, both taking advantage of RDMA.

RDMA over Converged Ethernet (RoCE) is a standard protocol that enables RDMA’s efficient data transfer over Ethernet networks, offloading the transport to a hardware RDMA engine for superior performance. Distributed TensorFlow takes full advantage of RDMA to eliminate processing bottlenecks, and even with large-scale images the Mellanox 100GbE network delivers the expected performance and exceptional scalability from the 32 NVIDIA Tesla P100 GPUs. Compared with both 25GbE and 100GbE, it’s evident that those who are still using 10GbE are falling short of the return on investment they might have thought they were achieving.
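For a rough feel of why link speed matters, the sketch below estimates how long one gradient exchange takes at different Ethernet speeds. It assumes a ring all-reduce, in which each worker sends about 2(N-1)/N times the gradient size per step. That is a common technique, but not necessarily what the benchmark above used. The estimate is an idealized lower bound that ignores latency, protocol overhead, and overlap with compute, and the 100 MB gradient size is a hypothetical figure.

```python
def ring_allreduce_seconds(gradient_bytes, num_workers, link_gbps):
    """Idealized time for one ring all-reduce over a link of the given speed.

    Assumptions: the link runs at full line rate and nothing else limits
    the transfer (no latency, no protocol overhead).
    """
    if num_workers < 2:
        return 0.0  # nothing to exchange with a single worker
    # Each worker sends 2 * (N - 1) / N times the gradient size per all-reduce.
    bytes_on_wire = 2 * (num_workers - 1) / num_workers * gradient_bytes
    bytes_per_second = link_gbps * 1e9 / 8  # gigabits/s -> bytes/s
    return bytes_on_wire / bytes_per_second


GRADIENT_BYTES = 100e6  # hypothetical: ~25M float32 parameters
WORKERS = 32            # matches the GPU count mentioned in the text

for gbps in (10, 25, 100):
    ms = ring_allreduce_seconds(GRADIENT_BYTES, WORKERS, gbps) * 1000
    print(f"{gbps:>3} GbE: ~{ms:.1f} ms per gradient exchange")
```

In this simplified model the exchange time scales inversely with link speed, so a step that is communication-bound at 10GbE has far more headroom at 100GbE.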

Beyond the performance advantages, the economic benefits of running AI workloads over Mellanox 25/50/100GbE are substantial. Spectrum switches and ConnectX network adapters deliver unbeatable performance at an even more unbeatable price point, yielding an outstanding ROI. With flexible port counts and cable options allowing up to 64 fully redundant 10/25/50/100 GbE ports in a 1U rack space, Mellanox end-to-end Ethernet solutions are a game changer for state-of-the-art data centers that wish to maximize the value of their data.

The performance and cost of the system are the ticket in the door, but an attractive on-prem Deep Learning platform needs to go further; much further. You need to:

- Provide a mechanism to share the GPUs without users or applications monopolizing the resources or getting starved.

- Ensure that the GPUs are fully utilized as much as possible. These beasts are expensive!

- Automate the process of identifying the resources on each node, and making them immediately useful without a lot of manual intervention or tuning.

- Make the system easy to use overall, since experts are always the minority in any population.

- Base the system on best-in-class open platforms to enhance ease of use, and to enable rapid integration of better frameworks and algorithms as they become available.
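The first requirement, sharing GPUs so that nobody monopolizes them and nobody starves, can be illustrated with a small sketch of max-min fair allocation. This is a generic textbook technique shown under simplifying assumptions, not a description of how any particular product schedules GPUs. The function name and the user names are hypothetical.

```python
def fair_share(total_gpus, requests):
    """Split whole GPUs among users by max-min fairness.

    requests maps each user to the number of GPUs they asked for.
    GPUs are handed out one at a time, round-robin, to users who still
    want more, so small requests are fully met and large ones cannot
    crowd everyone else out.
    """
    granted = {user: 0 for user in requests}
    remaining = total_gpus
    while remaining > 0:
        # Users whose request is not yet satisfied.
        wanting = [u for u in requests if granted[u] < requests[u]]
        if not wanting:
            break  # everyone is satisfied; leftover GPUs stay idle
        for user in wanting:
            if remaining == 0:
                break
            granted[user] += 1
            remaining -= 1
    return granted


# Hypothetical demand on an 8-GPU cluster.
print(fair_share(8, {"alice": 16, "bob": 2, "carol": 3}))
```

Here the small requests are met in full, and the large request receives only what is left after everyone has had an equal turn.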

Many of these challenges have been overcome for organizations that are able to offload their compute needs to the public cloud. But for those companies that cannot take this path for regulatory, competitive, security, bandwidth or cost reasons, there has not been a satisfactory solution.

In order to achieve the goals highlighted above, One Convergence has created a full stack Deep Learning-as-a-service application called DKube that addresses these challenges for on-prem and bare metal cloud users.

DKube provides a variety of valuable and integrated capabilities:

- It abstracts the underlying networked hardware consisting of compute nodes, GPUs, and storage, and allows them to be accessed and managed in a fully distributed, composable manner. The complexity of the topology, device details, and utilization is handled in a way that makes on-prem cloud operation as simple as – and in some ways simpler than – the public cloud. The system can be scaled out by adding nodes to the cluster, and the resources on the nodes will automatically be recognized and made useful immediately.

- It allows the resources, especially the expensive GPU resources, to be efficiently shared among the users of the application. The GPUs are allocated on demand, and become available to other users when a job is not actively using them.

- It is based on best-in-class open platforms, which make the components familiar to data scientists. DKube is based on Kubernetes, the standard for container orchestration, and is compatible with Kubeflow, supporting Jupyter, TensorFlow, and PyTorch – with more to come. It integrates the components into a unified system, guiding the workflow and ensuring that the pieces operate together in a robust and predictable way.

- It provides an out-of-the-box, UI-driven deep learning package for model experimentation, training, and deployment. The Deep Learning workflow coordinates with the hardware platform to remove the operational headaches normally associated with deploying on-prem. Users can focus on the problems at hand – which are difficult enough – rather than the plumbing.

- It is simple enough for users to get the application installed on an on-prem cluster and be working with models within 4 hours.

At the KubeCon + CloudNativeCon conference, to be held in Seattle on December 10-13, 2018, you can see a demo of the One Convergence DKube system using Mellanox high-speed network adapters, which enable Deep Learning-as-a-Service for on-prem platforms and bare metal deep learning clouds.